I attended a 3-day ACI Troubleshooting v3.1 bootcamp this week and I have to say, even though I do not get involved in actual implementation after the architecture and design, it is always valuable to understand how things (can) break and ways to troubleshoot. Here are some notes I put together:

Fabric Discovery

I learned that show lldp neighbors can save lives when the proposed diagram does not match the physical topology. Mapping each serial number to a node ID and name is a must before and during fabric discovery. The acidiag fnvread command is also very helpful during the process.
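To make the serial-to-node mapping check concrete, here is a minimal Python sketch. It assumes you have already collected the planned and discovered mappings by hand; the serial numbers, node IDs, names, and the function name compare_fabric are all made up for illustration, and nothing here parses real command output.

```python
from typing import Dict, List, Tuple

# Serial number -> (node ID, node name). All values are hypothetical.
NodeMap = Dict[str, Tuple[int, str]]


def compare_fabric(planned: NodeMap, discovered: NodeMap) -> List[str]:
    """Return a list of human-readable differences between plan and fabric."""
    issues: List[str] = []
    for serial, expected in sorted(planned.items()):
        actual = discovered.get(serial)
        if actual is None:
            issues.append(f"{serial}: planned as {expected} but not discovered")
        elif actual != expected:
            issues.append(f"{serial}: planned as {expected} but registered as {actual}")
    # Anything discovered that the design never mentioned.
    for serial in sorted(set(discovered) - set(planned)):
        issues.append(f"{serial}: discovered as {discovered[serial]} but not in the plan")
    return issues


if __name__ == "__main__":
    planned = {"SERIAL-A": (101, "leaf-101"), "SERIAL-B": (102, "leaf-102")}
    discovered = {"SERIAL-A": (101, "leaf-101"), "SERIAL-B": (103, "leaf-103")}
    for line in compare_fabric(planned, discovered):
        print(line)
```

An empty list means the fabric matches the design; anything else is worth resolving before registering more nodes.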

Access Policies

For any connected endpoint, verification can be done top down, bottom up, or randomly, but regardless of the methodology, always make sure the policies are all interconnected. I like the top-down approach, starting with switch policies (including VPC explicit protection groups) and switch profiles, then interface policies and interface profiles, followed by policy groups. The policy group is where all interface policies need to be in place (e.g., CDP, LLDP, port-channel mode) and, most importantly, the association to an AEP. The AEP in turn needs to be associated with a domain (physical, VMM, L2, L3), and the domain with a VLAN pool that has a range defined. If they are all interconnected, the AEP bridges everything, and then comes the logical part of the fabric.

I can only imagine what a missing AEP association can do in a real world deployment.
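The top-down walk through the access policies can be sketched as a simple chain check. This is only an illustration in Python: the step names, the dictionary layout, and the function first_broken_link are my own assumptions, not APIC object names, and the values would have to be filled in from whatever you see in the GUI.

```python
from typing import Dict, Optional

# Order of the top-down verification (hypothetical step names).
CHAIN = [
    "switch_profile",
    "interface_profile",
    "policy_group",
    "aep",
    "domain",
    "vlan_pool",
    "vlan_range",
]


def first_broken_link(policy: Dict[str, Optional[str]]) -> Optional[str]:
    """Return the first step with nothing associated, or None if the chain is complete."""
    for step in CHAIN:
        if not policy.get(step):
            return step
    return None


if __name__ == "__main__":
    # Example with the AEP association missing from the policy group.
    port = {
        "switch_profile": "leaf-101",
        "interface_profile": "leaf-101-ports",
        "policy_group": "server-pg",
        "aep": None,
        "domain": "phys-dom",
        "vlan_pool": "static-pool",
        "vlan_range": "100-199",
    }
    print(first_broken_link(port))
```

Stopping at the first gap mirrors how I would troubleshoot it by hand: there is little point checking the VLAN pool if the policy group never reaches an AEP.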

L2 Out

When extending a bridge domain to an external layer 2 network, a contract is required on the L2 Out (external EPG); that much is known. Now, assuming this is a no-filter contract, the L2 Out can be either the provider or the consumer, as long as the EPG associated with the extended bridge domain has the matching side of the contract. That is, if the L2 Out consumes the contract, the associated EPG needs to provide it, and if the L2 Out provides the contract, the EPG needs to consume it. In short, every time I think I have finally nailed the provider and consumer behavior, I learn otherwise.
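The matching rule described above fits in a few lines of Python. This is a sketch under my own assumptions: contract relations are modeled as (contract name, role) pairs, and the function name and contract names are hypothetical.

```python
from typing import Iterable, Set, Tuple

Relation = Tuple[str, str]  # (contract name, "provider" or "consumer")

OPPOSITE = {"provider": "consumer", "consumer": "provider"}


def matched_contracts(l2out: Iterable[Relation], epg: Iterable[Relation]) -> Set[str]:
    """Return contracts that one side provides and the other side consumes."""
    epg_relations = set(epg)
    matches: Set[str] = set()
    for name, role in l2out:
        if role not in OPPOSITE:
            raise ValueError(f"unknown role: {role!r}")
        if (name, OPPOSITE[role]) in epg_relations:
            matches.add(name)
    return matches


if __name__ == "__main__":
    l2out = [("permit-all", "consumer")]
    epg = [("permit-all", "provider")]
    print(matched_contracts(l2out, epg))
```

If the result is empty, both sides are either providing or consuming the same contract, or they reference different contracts altogether.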

L3 Out

Assuming all access policies are in place, an OSPF connection requires the same traditional checks, from MTU to network type. If the external device is using an SVI, the broadcast network type is required on the OSPF interface profile for the L3 Out. I had point-to-point for a while. This is probably basic, but one can spend considerable time checking unrelated configuration.
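As a reminder of which settings have to agree on both ends, here is a small Python sketch that compares the L3 Out side with the external device. The field names and sample values are assumptions for illustration; the values would come from your own configuration, not from any command output parsed here.

```python
from typing import Dict, List

# Settings that traditionally must match for an OSPF adjacency to form.
CHECKED_FIELDS = ["area", "mtu", "network_type", "hello_interval", "dead_interval"]


def ospf_mismatches(l3out_side: Dict[str, object], external_side: Dict[str, object]) -> List[str]:
    """Return the names of the settings that differ between the two sides."""
    return [
        field
        for field in CHECKED_FIELDS
        if l3out_side.get(field) != external_side.get(field)
    ]


if __name__ == "__main__":
    # Hypothetical values reproducing my point-to-point mistake.
    l3out = {"area": "0.0.0.1", "mtu": 1500, "network_type": "point-to-point",
             "hello_interval": 10, "dead_interval": 40}
    external = {"area": "0.0.0.1", "mtu": 1500, "network_type": "broadcast",
                "hello_interval": 10, "dead_interval": 40}
    print(ospf_mismatches(l3out, external))
```

Writing the settings down side by side like this would have pointed me at the network type long before I started looking at unrelated configuration.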

Static Port (Binding)

Basically the solution for any connectivity issue from endpoints behind a VMM domain. I have seen it working with and without static binding of VLANs. In the past, I associated this with the vSwitch policies: as long as the hypervisor saw the leaf in the topology under virtual networking, no static binding was needed. That is not the case anymore. The show vpc extended command is the way to show the active VLANs passing from the leaf to the host.

API Inspector

It is the easiest way to confirm the specifics of API calls. With Postman, it is just a matter of copying and pasting the method, URL and payload, with the inspector running in the background while a specific configuration is made via the GUI.
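The same copy and paste works outside Postman. Below is a minimal Python sketch that rebuilds a captured call using only the standard library. The hostname, tenant name, and token are placeholders, the function name build_replay is my own, and I am assuming the session token is sent in a cookie named APIC-cookie; check what the inspector and your login response actually show before relying on it.

```python
import json
import urllib.request


def build_replay(method: str, url: str, payload: dict, token: str = "") -> urllib.request.Request:
    """Rebuild a call captured in the API Inspector as a Request object (not sent)."""
    body = json.dumps(payload).encode("utf-8")
    request = urllib.request.Request(url, data=body, method=method)
    request.add_header("Content-Type", "application/json")
    if token:
        # Assumption: the APIC session token travels in this cookie.
        request.add_header("Cookie", f"APIC-cookie={token}")
    return request


if __name__ == "__main__":
    # Placeholder values standing in for what the inspector captured.
    replay = build_replay(
        method="POST",
        url="https://apic.example.com/api/mo/uni.json",
        payload={"fvTenant": {"attributes": {"name": "Example"}}},
        token="TOKEN-FROM-LOGIN",
    )
    print(replay.get_method(), replay.full_url)
    print(replay.data.decode("utf-8"))
```

The sketch stops short of sending anything. Passing the object to urllib.request.urlopen would do that, but a lab APIC usually has a self-signed certificate, so certificate handling would need to be dealt with first.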

VMM AVE

The process is very similar to deploying a distributed virtual switch, except that it needs VLAN or VXLAN mode defined. If running VXLAN encapsulation, a multicast address is required along with a multicast pool, as well as a firewall mode. The rest of the configuration is the same as far as adding vCenter credentials and specifying the data center name and IP address. After going through the process a few times without any success, with AVE not getting pushed to vCenter, I enabled the infra VLAN on the AEP towards the host, which is a requirement when running VXLAN, and there it went.
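The requirements above can be turned into a short pre-flight checklist. This Python sketch only checks the items mentioned in this section; the dictionary keys, sample addresses, and the function name ave_preflight are assumptions of mine and do not correspond to APIC attribute names.

```python
from typing import Dict, List


def ave_preflight(config: Dict[str, object]) -> List[str]:
    """Return the missing prerequisites for the chosen encapsulation mode."""
    problems: List[str] = []
    mode = config.get("encap_mode")
    if mode not in ("vlan", "vxlan"):
        problems.append("encap_mode must be 'vlan' or 'vxlan'")
        return problems
    if mode == "vxlan":
        if not config.get("multicast_address"):
            problems.append("multicast address is not set")
        if not config.get("multicast_pool"):
            problems.append("multicast pool is not set")
        if not config.get("infra_vlan_on_aep"):
            problems.append("infra VLAN is not enabled on the AEP towards the host")
    return problems


if __name__ == "__main__":
    # Hypothetical values reproducing the situation I ran into.
    attempt = {
        "encap_mode": "vxlan",
        "multicast_address": "239.1.1.1",
        "multicast_pool": "ave-mcast-pool",
        "infra_vlan_on_aep": False,
    }
    for problem in ave_preflight(attempt):
        print(problem)
```

Had I run through a list like this first, the missing infra VLAN would have shown up before the third failed attempt.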

Follow-up

The official ACI troubleshooting e-book has screenshots based on earlier versions but is still relevant, as the policy model has not changed. For the most up-to-date troubleshooting tools and tips, the BRKACI-2102 ACI Troubleshooting session from Cisco Live is recommended.

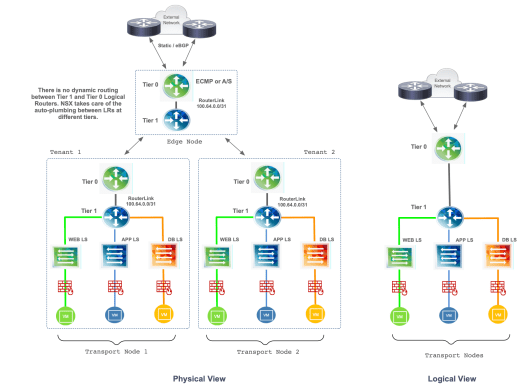

Figure 1 – Conceptual Design